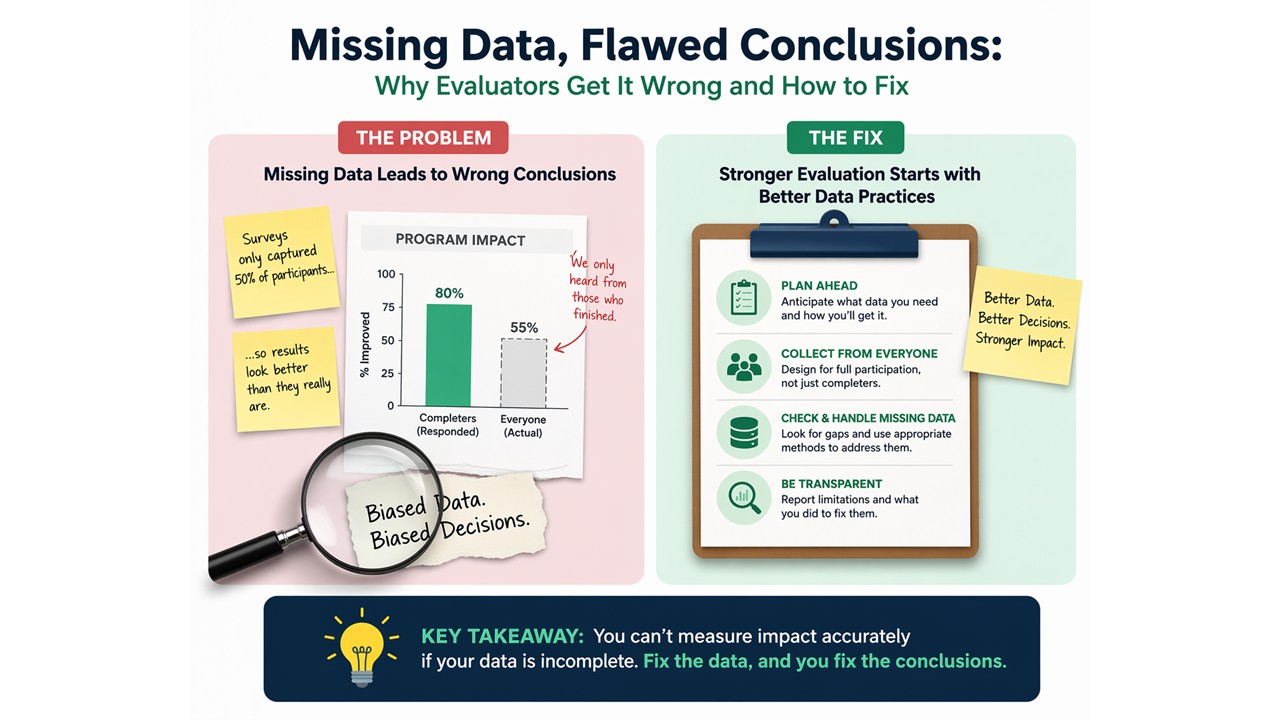

In monitoring and evaluation (M&E), everything rests on the quality of the data, yet missing data is still routinely brushed aside as a minor issue. It shows up in almost every dataset, whether through skipped questions, sensitive items like income, or simple mistakes during data collection, and if it is not handled properly, it can quietly distort the results and the conclusions drawn from them

In monitoring and evaluation (M&E), everything rests on the quality of the data, yet missing data is still routinely brushed aside as a minor issue. It shows up in almost every dataset, whether through skipped questions, sensitive items like income, or simple mistakes during data collection, and if it is not handled properly, it can quietly distort the results and the conclusions drawn from them.

Respondents may skip questions, refuse to answer sensitive items such as income, or enumerators may accidentally leave fields blank during data collection. How missing data is handled has direct consequences for the validity of an evaluation. Poor handling can introduce bias, reduce the strength of the analysis, and lead to conclusions that do not accurately reflect program performance.

Improper treatment of missing data can lead to biased estimates, reduced statistical power, and misleading conclusions about program effectiveness. For evaluators and researchers, managing missing responses is therefore not only a technical issue but also a critical aspect of ensuring the reliability of evaluation findings.

The approaches discussed here connect practical data management to the broader evaluation methods, tools, and technologies that strengthen evidence-based decision-making.

Why Missing Data Matters

Survey-based evaluations often aim to measure outcomes such as household income, education levels, access to services, or behavioral changes. When responses are missing, the available data may no longer represent the population accurately.

For example, imagine a household income survey where higher-income respondents are less willing to report their income. If these observations are missing, the calculated average income may appear lower than it actually is. In evaluation terms, this introduces bias, which can distort conclusions about program performance.

Missing responses can also reduce the effective sample size. When cases with incomplete data are excluded from analysis, the statistical power of the evaluation decreases. This makes it harder to detect real program effects.

Because of these risks, managing missing data is an essential part of evaluation methodology and data analysis.

Understanding Why Data Is Missing

Before deciding how to handle missing responses, evaluators should first understand why the data is missing. In evaluation research, missing data are generally classified into three categories.

- Missing Completely at Random (MCAR) occurs when missing responses happen purely by chance. For example, an enumerator may accidentally skip a question. In such cases, the missingness is unrelated to the characteristics of the respondent.

- Missing at Random (MAR) occurs when missingness is related to other observed variables in the dataset. For instance, younger respondents may be less likely to report their income, meaning that missing income responses are related to age.

- Missing Not at Random (MNAR) occurs when the missingness is directly related to the value itself. For example, respondents with very high incomes may deliberately avoid reporting their income.

In practice, this is the point where analytical choices begin to diverge. The same dataset can be treated very differently depending on what is driving the missingness, and those decisions have a direct impact on the results.

MNAR in Sensitive Financial Data – Livestock Enterprises

While evaluating a GAC-funded programme supporting small enterprises in the livestock sector, in South Punjab, Pakistan, I encountered a consistent pattern of missing data around business profits and outstanding debt. A noticeable proportion of respondents declined to answer these questions.

During fieldwork and follow-up qualitative discussions, it became clear that this was not random. Two groups were particularly reluctant:

- Those earning relatively higher profits, who were cautious about disclosing income due to concerns around taxation or fear of being excluded from project support, and

- Those facing financial stress or debt, who were uncomfortable sharing negative business performance and feared losing access to second and third tranches of loans and grants under the project.

This pointed to a Missing Not at Random (MNAR) situation, where the likelihood of non-response was directly linked to the underlying value of the variable itself i.e. the business financial status.

Given this, I avoided applying standard imputation techniques, as they would have likely distorted the results. Instead, I adapted the approach during analysis by:

- using income and profit ranges rather than exact figures,

- relying on proxy indicators such as herd size, sales turnover patterns, and input expenditure, and

- triangulating findings with qualitative interviews.

Practical Methods for Handling Missing Data

Evaluation practitioners have several options for dealing with missing responses. These approaches range from simple techniques used in basic descriptive analysis to advanced statistical methods applied in rigorous impact evaluations.

- Complete Case Analysis

When the proportion of missing data is small, analysts sometimes rely on complete case analysis, where only fully observed records are used. This approach is straightforward and often applied by default in statistical software. However, it is only appropriate when the missingness is limited and unlikely to affect the results in a systematic way..

- Simple Replacement Methods

Several straightforward techniques are commonly used in applied evaluation work.

Mean substitution replaces missing values with the average of the observed data. While easy to implement, it reduces variability in the dataset and can weaken relationships between variables, so it should be used with caution.

Another common approach is hot deck imputation, where evaluators replace missing values with observed values from similar respondents in the dataset. This technique is widely used in large household surveys because it preserves the distribution of the original data.

Cold deck imputation uses external reference data, such as national surveys or administrative statistics, to estimate missing values.

- Model-Based Statistical Approaches

When missing data is moderate or when evaluations involve statistical modeling, more robust techniques are recommended.

Regression imputation predicts missing values using relationships between variables. For example, missing income values may be estimated using age and education as predictors.

The Expectation–Maximization (EM) algorithm estimates model parameters iteratively in the presence of missing data, helping retain the overall structure of the dataset.

These methods help maintain the structure of the data and reduce the risk of biased results.

- Advanced Techniques for Complex Evaluations

In complex evaluation studies or large datasets, advanced imputation techniques are often preferred.

Multiple imputation is widely considered one of the most reliable approaches. Instead of filling missing values with a single estimate, this method creates multiple plausible datasets, analyzes each one separately, and combines the results using pooling rules that account for both within- and between-imputation variance, thus capturing the uncertainty associated with missing data.

Bayesian imputation applies probabilistic modeling to estimate missing values using probability distributions. It is particularly useful when evaluators need to incorporate prior knowledge or external data into the estimation process, making it well suited for contexts where historical survey data or expert assumptions can inform missing value predictions. Although technically advanced, researchers increasingly apply it in large-scale statistical analyses.

Bayesian imputation uses probability models to estimate missing values by combining observed data with prior information. In practice, this approach becomes useful when the evaluator has access to reliable prior data—for example, from baseline surveys, routine monitoring systems, or earlier evaluation rounds—or when expert assumptions need to be formally incorporated into the analysis. So, the Bayesian imputation is better suited for cases where prior knowledge is strong and can be justified, rather than as a default approach.

These advanced techniques are particularly relevant for impact evaluations, econometric analysis, and large national surveys.

The Role of Evaluation Tools and Technologies

Modern evaluation increasingly relies on digital tools and statistical technologies to manage complex datasets.

Software platforms such as SPSS, Stata, R, and Python allow evaluators to automate missing data analysis and apply advanced imputation techniques efficiently. These tools can identify patterns of missingness, generate predicted values, and perform multiple imputation procedures across thousands of observations.

Digital data collection platforms such as KoboToolbox, SurveyCTO, and ODK also help reduce missing responses by incorporating built-in validation rules, skip logic, and mandatory fields during data collection.

By combining sound evaluation methods with modern analytical tools and technologies, evaluators can significantly improve data quality and analytical reliability.