Over the past two decades, the evaluation field has significantly strengthened its technical quality. Methodological standards have improved, mixed-methods approaches are now widely used, and expectations around rigor and transparency have grown. Yet one challenge persists: even technically strong evaluations, built on solid methods and careful analysis, often have limited influence on decision-making.

Across development, humanitarian, and environmental programming, evaluation reports are delivered, formally acknowledged, and sometimes even praised. Yet their findings rarely shape strategic choices, resource allocation, or program redesign. As Munyayi (2025) observes, this pattern reflects a broader challenge in evaluation practice: reports often fulfill accountability requirements but have little influence on the decisions that follow.

This gap points to a deeper question. Why do technically strong evaluations so often fail to shape the decisions they are meant to inform?

Drawing on experience across evaluations, monitoring, and learning systems, I see evaluation influence not simply as a technical outcome, but as something shaped by how evaluations interact with organizational decision-making processes. Four recurring factors help explain why even well-executed evaluations often struggle to matter.

Misalignment Between Evaluation Timing and Decision Cycles

One of the most common barriers to evaluation influence is timing. Findings often arrive after key decisions have already been made. By the time evaluation results are presented, budgets may be finalized, program designs approved, or donor commitments secured. Evaluations are then expected to inform decisions that are no longer open to reconsideration.

This misalignment rarely stems from evaluators alone. In many organizations, evaluation timelines are driven more by contractual obligations or reporting requirements than by the moments when decisions are actually made. As a result, evaluations are often produced to satisfy accountability requirements rather than to inform decisions that are still open.

When evaluations arrive after decision windows have effectively closed, even well-substantiated findings struggle to gain traction. Influence depends on proximity to decision moments, not simply on adherence to evaluation timelines.

Designing Evaluations for Questions Instead of Decisions

A second factor lies in how evaluations are framed at the design stage. Evaluation designs often begin with learning questions: what do we want to know about relevance, effectiveness, or impact? While these questions are important, they are frequently developed without clear consideration of how findings will be used in practice:

- Who are the primary users of the evaluation?

- What decisions is the evaluation expected to inform?

- When will those decisions be made?

When these questions are left implicit, evaluations may generate insights that are analytically sound but difficult to apply in practice. Findings may be interesting, even compelling, yet still fail to support concrete choices such as whether to adapt an intervention, scale an approach, or discontinue a strategy.

In these situations, evaluation findings must compete with other drivers of decision-making, including political considerations, budget constraints, and organizational priorities. Without a clear link to specific decision needs, even strong evidence can struggle to gain traction.

When Evaluation Influence Is Treated as an Event, Not a Process

Evaluation influence is often treated as something that happens at the end of the evaluation cycle, typically through dissemination workshops, presentations, or final reports.

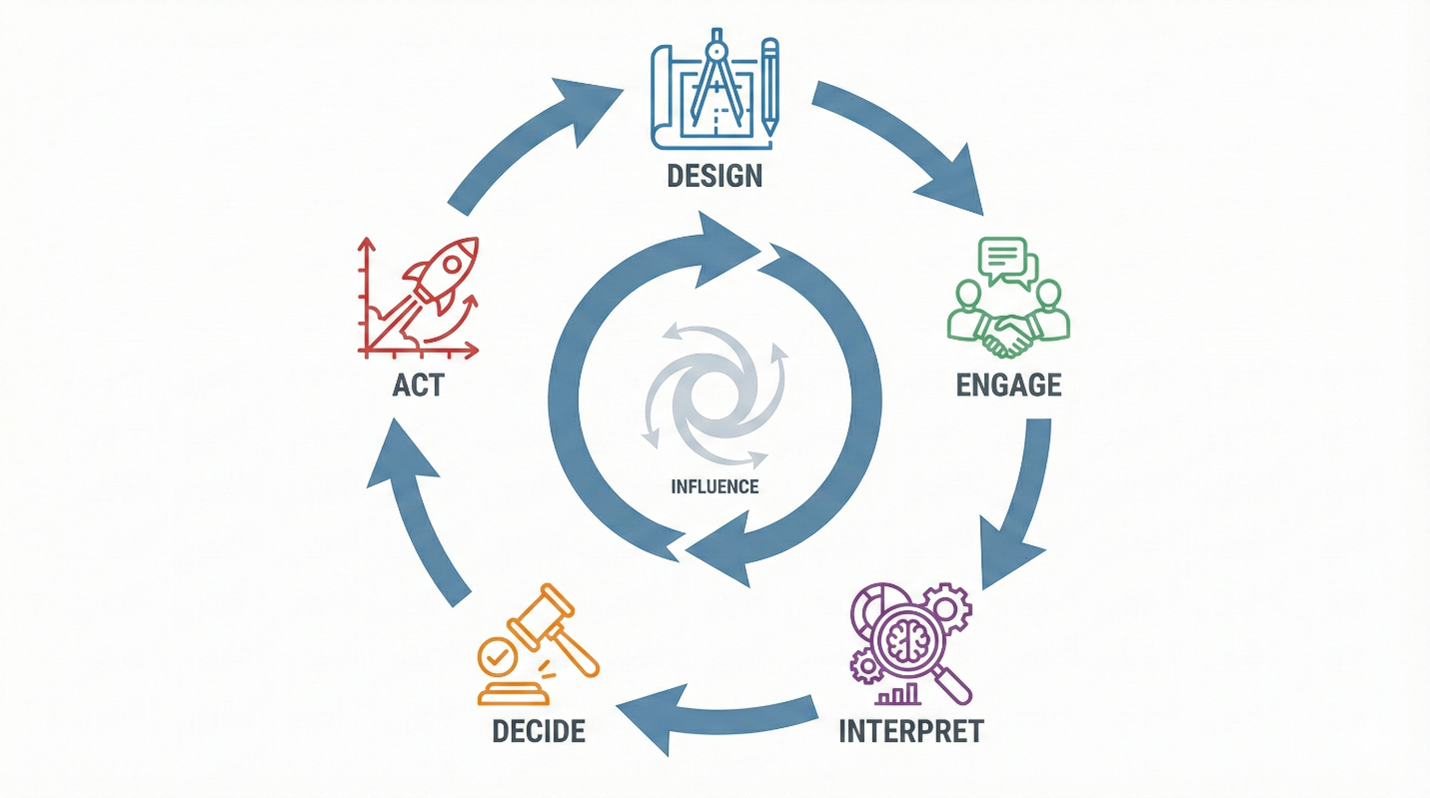

The assumption is that once findings are formally shared, decision-makers will absorb them and act accordingly. In practice, influence rarely works this way. More often, it develops gradually through an ongoing process of engagement, interpretation, and dialogue.

When evaluation findings are delivered as static products rather than as part of a broader process of reflection and discussion, they are easy to acknowledge and just as easy to set aside. By contrast, evaluations that shape decisions tend to involve stakeholders throughout the process, creating shared understanding and ownership of findings well before recommendations are finalized.

This perspective aligns with utilization-focused evaluation, which emphasizes participation and iterative interpretation as key conditions for use (Patton, 2008).

Organizational Incentives That Discourage Evaluation Use

Evaluation findings are interpreted within organizational systems that shape how evidence is received and acted upon.

In many contexts, such findings can carry risks. They may reveal underperformance, challenge established strategies, or complicate existing reporting narratives about program success. Where accountability systems prioritize compliance, upward reporting, or reputational management, decision-makers may have limited incentives to engage deeply with evidence that introduces uncertainty or discomfort.

In these settings, limited evaluation use is not simply a technical problem. It reflects broader organizational and political dynamics that constrain how evidence can be discussed and acted upon. Such dynamics are often visible to evaluators but difficult to address directly, particularly when evaluation mandates emphasize delivery of reports rather than learning.

What It Means for Evaluation Practice

These patterns suggest that strengthening evaluation influence requires more than continued investment in technical rigor. Evaluations are most likely to inform decisions when attention is given not only to methodological quality, but also to how evaluations are positioned within decision processes.

In practice, this means paying closer attention to several conditions:

- alignment between evaluation timing and decision cycles,

- clear articulation of the decisions the evaluation is intended to inform,

- ongoing facilitation and sensemaking throughout the evaluation process, and

- the organizational incentives that shape how evidence is received and used.

For evaluators, this may mean moving beyond the role of evidence producers toward actively shaping the conditions that allow evidence to inform real choices.

References

Munyayi, A. (2025). From shelf-ware to action: Unpacking the utilization of project evaluation reports in Zimbabwe’s development sector. International Journal of Research and Scientific Innovation. https://doi.org/10.51244/IJRSI.2025.12050096

Patton, M. Q. (2008). Utilization-focused evaluation (4th ed.). Sage Publications.

World Bank Independent Evaluation Group. (2014). Learning and results in World Bank operations: Toward a new learning strategy. World Bank.